Foundation Models in Astronomy: Why They Matter

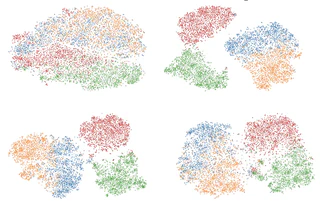

Foundation models (large neural networks pre-trained on massive datasets) are starting to transform astronomical data analysis. Models such as DINOv2 and CLIP, originally trained on natural images, can be fine-tuned for astronomical tasks with surprisingly good results. At EAS 2024, I presented early comparisons of these models on galaxy morphological classification, and my GRETSI 2025 paper digs into how best to specialize DINOv2 for astronomy.

The key challenge is the domain gap: astronomical images are multi-band, span a high dynamic range, and have distinctive noise properties, so they look nothing like the everyday photos these models were pre-trained on. Choosing the right fine-tuning strategy turns out to matter a lot, and it is the core focus of my current work.
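To make the dynamic-range part of that gap concrete, here is a minimal sketch of an arcsinh stretch, a common way to compress astronomical pixel values before handing them to a model trained on natural images. It is an illustration only, not the preprocessing used in my papers: the function names, the `softening` parameter, and the flux values are all hypothetical.

```python
import math

def linear_stretch(pixels):
    """Rescale values linearly to [0, 1] (what a naive image export would do)."""
    lo, hi = min(pixels), max(pixels)
    if hi == lo:
        return [0.0 for _ in pixels]
    return [(p - lo) / (hi - lo) for p in pixels]

def asinh_stretch(pixels, softening=1.0):
    """Apply an arcsinh stretch, then rescale to [0, 1].

    asinh is roughly linear for values below `softening` and roughly
    logarithmic above it, so faint structure stays visible next to bright cores.
    `softening` is a hypothetical tuning parameter for this sketch.
    """
    stretched = [math.asinh(p / softening) for p in pixels]
    return linear_stretch(stretched)

# Made-up fluxes: sky background, faint outskirts, disk, bright core.
fluxes = [0.0, 1.0, 10.0, 1000.0]

linear = linear_stretch(fluxes)
compressed = asinh_stretch(fluxes)

for flux, lin, comp in zip(fluxes, linear, compressed):
    print(f"flux={flux:8.1f}  linear={lin:.3f}  asinh={comp:.3f}")
```

With a linear rescaling, everything except the bright core collapses to nearly black. The arcsinh version spreads the faint values over a usable range, which is closer to the pixel statistics a natural-image backbone expects.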

On the multimodal side, I am involved in the UniverseTBD collaboration, where I contribute to AstroLLaVA — a vision-language model for astronomy presented at ICLR 2025.